To run training jobs on a SageMaker managed environment, you need the following:

- An AWS account configured with the AWS Command Line Interface (AWS CLI) to have sufficient permissions to run SageMaker training jobs.
- Docker configured (SageMaker local mode) and the SageMaker Python SDK installed on your local computer.
- (Optional) Studio set up for experiment tracking and the Amazon SageMaker Experiments Python SDK.

To get started, complete the following steps:
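The local prerequisites listed above can be spot-checked with a short script before you launch anything. The following is a minimal sketch, not code from this post: the function name `check_prerequisites` is hypothetical, `smexperiments` is assumed to be the import name of the Experiments SDK, and the script only confirms that the tools are present on your machine. It does not verify AWS credentials or IAM permissions.

```python
import importlib.util
import shutil


def check_prerequisites():
    """Report which local prerequisites appear to be available.

    Hypothetical helper for illustration: it only looks for executables
    on PATH and importable packages, nothing more.
    """
    return {
        # AWS CLI executable on PATH (credentials and permissions are not checked)
        "aws_cli": shutil.which("aws") is not None,
        # Docker executable on PATH, needed for SageMaker local mode
        "docker": shutil.which("docker") is not None,
        # SageMaker Python SDK, importable as the `sagemaker` package
        "sagemaker_sdk": importlib.util.find_spec("sagemaker") is not None,
        # Optional Experiments SDK; `smexperiments` is the assumed import name
        "sagemaker_experiments_sdk": importlib.util.find_spec("smexperiments") is not None,
    }


if __name__ == "__main__":
    for name, found in check_prerequisites().items():
        print(f"{name}: {'found' if found else 'missing'}")
```

A `missing` entry for the Experiments SDK is not a blocker, because that prerequisite is optional.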

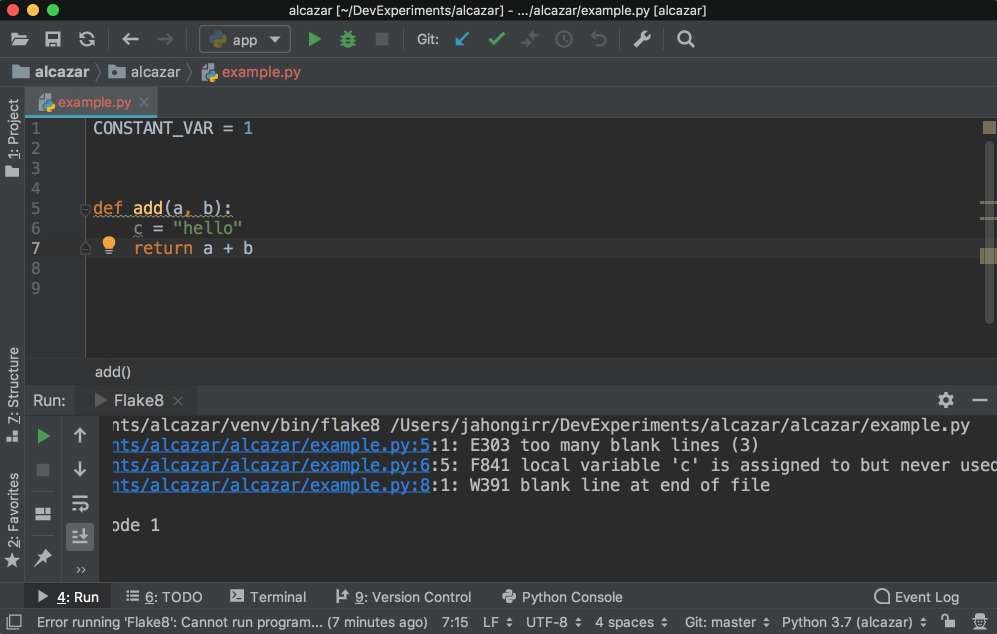

Amazon SageMaker, a fully managed ML service, enables organizations to put ML ideas into production faster and improve data scientist productivity by up to 10 times. Your team can quickly and easily train and tune models, move back and forth between steps to adjust experiments, compare results, and deploy models to production-ready environments.

Amazon SageMaker Studio offers an integrated development environment (IDE) for ML. Developers can write code, track experiments, visualize data, and perform debugging and monitoring all within a single, integrated visual interface, which significantly boosts developer productivity. Within Studio, you can also use Studio notebooks, which are collaborative notebooks (the view is an extension of the JupyterLab interface). You can launch quickly because you don’t need to set up compute instances and file storage beforehand. SageMaker Studio provides persistent storage, which enables you to view and share notebooks even if the instances that the notebooks run on are shut down. For more details, see Use Amazon SageMaker Studio Notebooks.

Many data scientists and ML researchers prefer to use a local IDE such as PyCharm or Visual Studio Code for Python code development while still using SageMaker to train the model, tune hyperparameters with SageMaker hyperparameter tuning jobs, compare experiments, and deploy models to production-ready environments. In this post, we show how you can use SageMaker to manage your training jobs and experiments on AWS, using the Amazon SageMaker Python SDK with your local IDE. For this post, we use PyCharm for our IDE, but you can use your preferred IDE with no code changes. The code used in this post is available on GitHub.
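As a rough illustration of driving SageMaker training from a local IDE, the sketch below switches between local mode and a managed instance by changing only the instance type passed to the SageMaker Python SDK's generic `Estimator`. It is not the code from the post's GitHub repository. The helper names, the `ml.m5.xlarge` instance type, and the image URI and role ARN you would pass in are all placeholders chosen for illustration.

```python
def build_estimator_kwargs(image_uri, role_arn, local=True, hyperparameters=None):
    """Assemble keyword arguments for sagemaker.estimator.Estimator.

    Hypothetical helper: the default values here are illustrative only.
    """
    return {
        "image_uri": image_uri,
        "role": role_arn,
        "instance_count": 1,
        # "local" runs the training container in Docker on this machine;
        # an ml.* instance type runs it on SageMaker managed infrastructure.
        "instance_type": "local" if local else "ml.m5.xlarge",
        "hyperparameters": dict(hyperparameters or {}),
    }


def run_training(estimator_kwargs, inputs):
    """Start a training job with the given settings.

    Requires the SageMaker Python SDK, plus Docker when running in local mode.
    """
    # Imported lazily so build_estimator_kwargs works without the SDK installed.
    from sagemaker.estimator import Estimator

    estimator = Estimator(**estimator_kwargs)
    estimator.fit(inputs)
    return estimator
```

Keeping the argument assembly separate from the call to `fit` lets you inspect or log the settings in the IDE before any container or managed job is started.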

As more machine learning (ML) workloads go into production, many organizations must bring ML workloads to market quickly and increase productivity in the ML model development lifecycle. However, the ML model development lifecycle is significantly different from an application development lifecycle. This is due in part to the amount of experimentation required before finalizing a version of a model.